CodeRabbit matters because code review is where many teams lose speed. It is officially positioned as an AI code review tool that gives context-aware feedback on pull requests, which puts it in a different lane from code generation tools.

It suits development teams that work in pull requests and need better review coverage, clearer explanations, and faster feedback loops. If PR review is a recurring bottleneck, CodeRabbit addresses a real pain point.

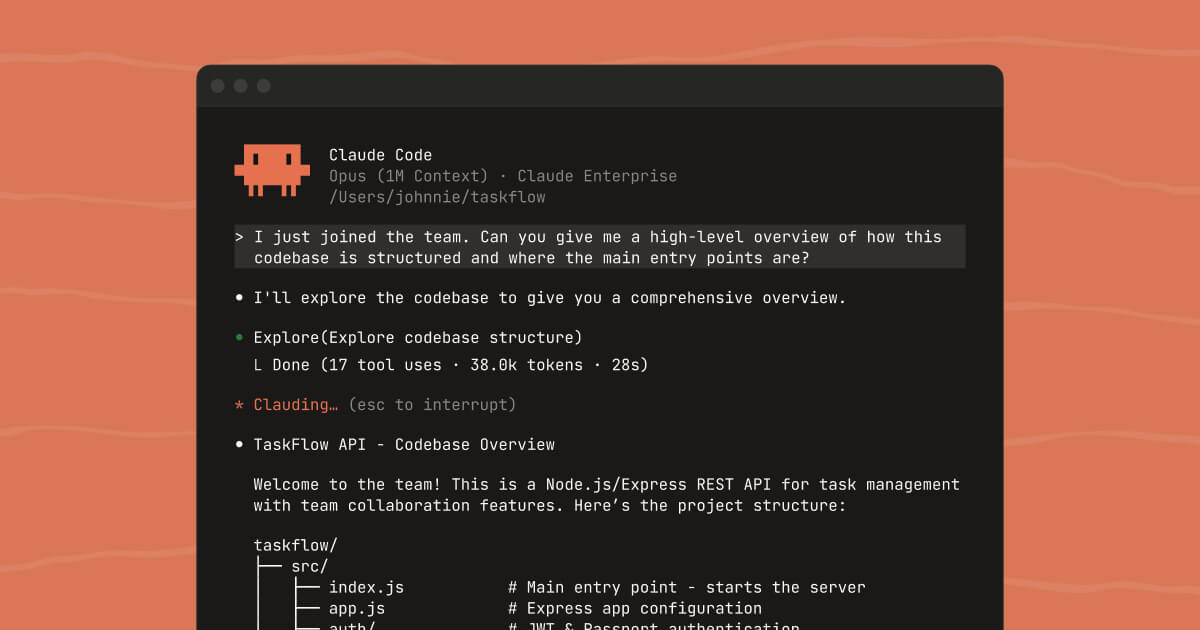

The main value is collaboration support. A reviewer agent that can point at specific lines, explain issues, and keep the surrounding context in view can reduce back-and-forth on routine review work, especially on active teams with many concurrent changes.

The tradeoff is that AI review comments are only helpful when they remain relevant and accurate. Teams still need human reviewers to prioritize, validate, and decide what actually matters for the codebase.

A practical first test is to run CodeRabbit on a real PR and compare the feedback quality with your normal review flow. If it shortens the path to actionable review without creating noise, the product is doing meaningful work.
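
One way to make that comparison less subjective is to hand-label each review comment on the test PR as actionable or not, then tally the results per source. The sketch below is a minimal illustration under stated assumptions: the `ReviewComment` type, the `summarize` helper, and all sample values are hypothetical, and nothing here calls CodeRabbit or any hosting API. You would enter the comments and labels yourself.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class ReviewComment:
    """One review comment on the test PR (hypothetical record, labeled by hand)."""
    source: str           # "ai" or "human"
    posted_at: datetime   # when the comment appeared on the PR
    actionable: bool      # your team's judgment: did it lead to a real change?


def summarize(comments: list[ReviewComment], pr_opened_at: datetime, source: str) -> dict:
    """Tally comment volume, noise ratio, and time to first actionable comment."""
    mine = [c for c in comments if c.source == source]
    actionable = [c for c in mine if c.actionable]
    first: Optional[datetime] = min((c.posted_at for c in actionable), default=None)
    return {
        "total": len(mine),
        "actionable": len(actionable),
        # Share of comments the team judged not worth acting on.
        "noise_ratio": (len(mine) - len(actionable)) / len(mine) if mine else 0.0,
        # None when the source produced no actionable comment at all.
        "minutes_to_first_actionable": (
            (first - pr_opened_at).total_seconds() / 60 if first else None
        ),
    }


if __name__ == "__main__":
    # Made-up sample data for illustration only, not real measurements.
    opened = datetime(2024, 1, 1, 9, 0)
    comments = [
        ReviewComment("ai", datetime(2024, 1, 1, 9, 5), True),
        ReviewComment("ai", datetime(2024, 1, 1, 9, 5), False),
        ReviewComment("human", datetime(2024, 1, 1, 11, 0), True),
    ]
    for source in ("ai", "human"):
        print(source, summarize(comments, opened, source))
```

A low noise ratio combined with a shorter time to the first actionable comment would support the "shorter path without noise" outcome described above. A single PR is a small sample, so repeating the exercise on a few PRs of different sizes gives a fairer picture.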