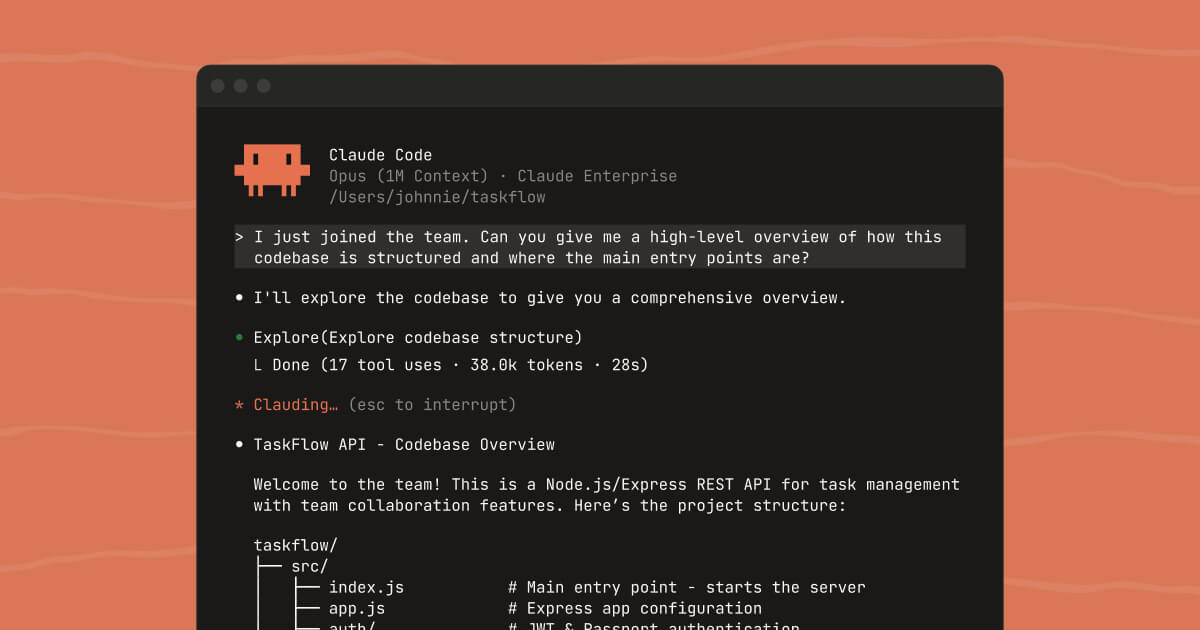

Dakou matters because many AI coding tools still depend on active user babysitting. Its official positioning describes an asynchronous agent product with an independent cloud sandbox that can handle software development, product research, and report generation. That points to a longer-running execution model than ordinary coding chat.

It suits developers, technical leads, independent teams, and builders who regularly juggle multiple tasks and would benefit from offloading bounded work into a separate execution environment. If your pain point is task throughput and context switching, the platform’s direction is meaningful.

What makes Dakou worth attention is its attempt to move beyond instant suggestions toward asynchronous progress. A cloud sandbox with parallel task handling matters when the work includes coding, verification, analysis, and output generation that do not all need constant direct supervision.
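
To make the "dispatch now, check in later" pattern concrete, here is a minimal generic sketch using Python's standard `asyncio` library. It is not Dakou's API: the function names `run_task` and `dispatch_and_check_in`, the task labels, and the sleep durations are all hypothetical stand-ins for bounded work running in a separate environment.

```python
import asyncio


async def run_task(name: str, seconds: float) -> str:
    # Hypothetical stand-in for bounded work (coding, verification, a report).
    await asyncio.sleep(seconds)
    return f"{name}: done"


async def dispatch_and_check_in() -> list[str]:
    # Start every task up front so none of them blocks the others.
    jobs = [
        asyncio.create_task(run_task("code change", 0.03)),
        asyncio.create_task(run_task("verification", 0.02)),
        asyncio.create_task(run_task("report", 0.01)),
    ]
    # The caller could do unrelated work here; gather() is the check-in point.
    # Results come back in dispatch order, not completion order.
    return await asyncio.gather(*jobs)


results = asyncio.run(dispatch_and_check_in())
print(results)
```

The point of the sketch is only the shape of the workflow: work is handed off in one step and collected in another, so attention is needed at the boundaries rather than throughout.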

The tradeoff is that longer workflows create more places for mistakes to hide. Async execution does not remove the need for code review, testing discipline, dependency checks, and deployment caution. The right expectation is steadier task progress, not automatic software delivery.

This site recommends Dakou for users who want AI to keep pushing development or research tasks forward between check-ins. If your workflow suffers more from interruption and handoff cost than from pure typing speed, it is worth serious attention.